AI Red Teaming

Proactively expose critical LLM vulnerabilities

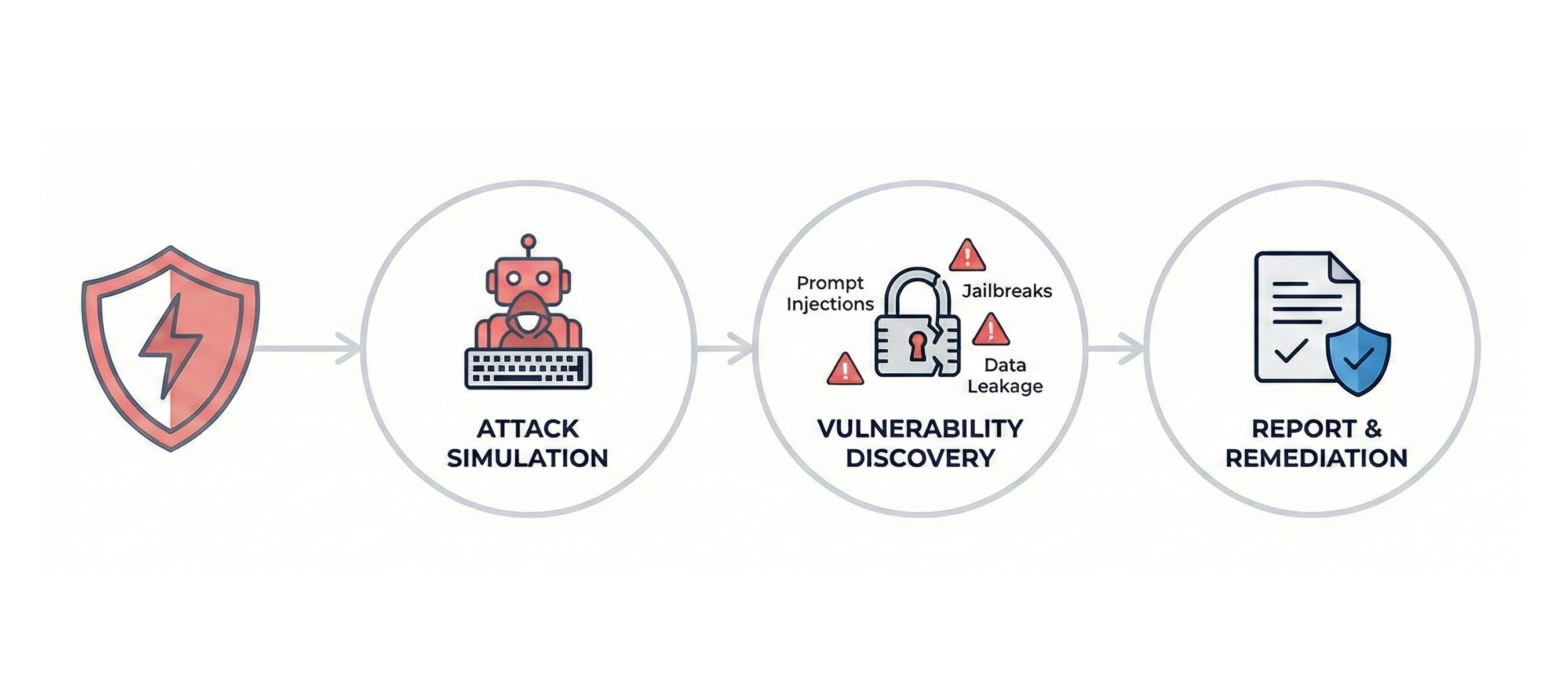

Aggressively stress-test your language models to uncover prompt injections, system jailbreaks, and hidden data leakage.

Operationalizing Red Teaming

Threat Modeling

Identify critical attack vectors and define the scope of adversarial testing for each enterprise LLM deployment.

Adversarial Simulation

Execute attack scenarios to expose hidden prompt injections, jailbreaks, and critical data leaks.

Vulnerability Remediation

Deliver comprehensive technical reports with remediation guidance, mapped to the OWASP Top 10 for LLM Applications.